Med tech evolution

Back in 1965, Gordon Moore, who would go on to co-found Intel, predicted that the number of components that could fit on an integrated circuit would double at a regular pace (he later settled on every two years), making computers faster and more capable while the cost of computing fell. That prediction held true for decades, and the eponymous Moore’s Law became accepted fact in the world of computer innovation.

Can the same be said for medical devices?

Trace the journey from proof of concept through successive stages of innovation, and there are plenty of examples where Moore’s Law applies to medical technology, not just in terms of capability, but also in reduced size and greater ease of use.

In the best cases, med tech evolution leads to devices becoming usable by people with no medical training. In other words, they evolve from clinical-use-only equipment into personal devices.

If the purpose of medical technology is to help improve health and wellbeing, then a device’s evolution from clinical equipment to personal device is the goal.

Here are four examples of med tech evolving from professional equipment into personal devices.

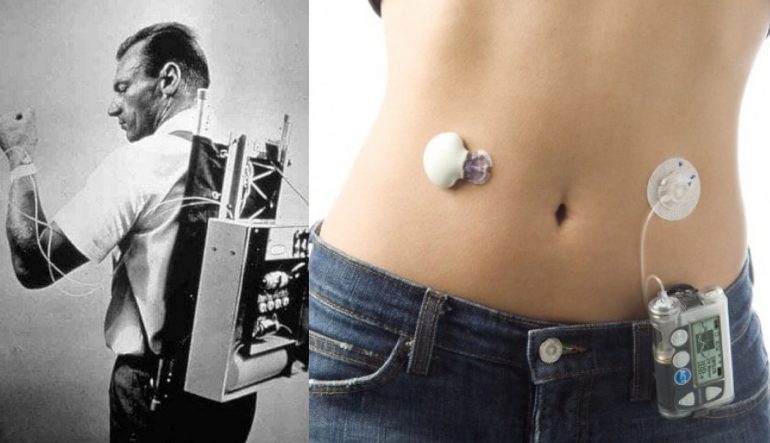

Insulin pumps

Invented by Arnold Kadish in 1974, the Biostator (pictured) measured blood glucose levels and dispensed insulin every five minutes. It represented a revolution in the treatment of diabetes and is an early example of wearable technology, although nothing like what we’re used to today.

The Biostator was huge and heavy. Designed as a backpack with multiple wires connecting to the wearer’s wrist, it integrated a computer, a computer-operated pump and a printer to plot blood glucose readings. It was an excellent proof of concept, but one with a long way to go before it was ready for practical use.

Other technologies for measuring glucose levels and injecting insulin have origins stretching back as far as the 1950s. Although never as cumbersome as the Biostator, an all-in-one device for monitoring and treating diabetes, they too have been vastly improved in the decades since.

Today, continuous glucose monitoring (CGM) systems use small wearable sensors that link to smartphone apps for data visualisation and to wearable pumps for automated insulin delivery. In future, such systems may become entirely non-invasive; one approach being explored is a skin patch that reads sweat to monitor glucose levels.

Ultrasonography

While the science underpinning ultrasonic methods dates back to the 19th century, Scottish obstetrician and gynaecologist Ian Donald is widely recognised as the inventor of the first 2D ultrasound scanning machine.

The Diasonograph was the subject of the 1958 paper on ultrasound scanning techniques that Donald and his team published in The Lancet. It sparked something close to a race in static scanning innovation over the following decade.

British and US hospitals started to adopt the technology in the 1970s, and by the end of the 20th century ultrasound was commonplace in maternity clinics across the developed world. Next came 3D and real-time 3D (known as 4D) ultrasound imaging.

The biggest step change in adoption, however, was driven less by the image itself than by the size and usability of sonographic equipment.

What began as room-filling scanning, computation and imaging equipment is now hand-held. An ultrasound device the size of a TV remote can share images in real time with a paired tablet or smartphone.

While these devices typically cost a few thousand, that is a tiny fraction of the cost of earlier hospital-grade equipment, or even of today’s. This not only makes personal ownership possible, but also lowers the barrier for general practitioners and other medical professionals to adopt the technology.

Hearing aids

Cow and ram horns were used as hearing amplifiers as early as the 13th century, and the ear horn was invented in the early 1800s. Several decades later, Thomas Edison got on the case.

In the late 1870s, Edison invented a carbon transmitter for the telephone that acted as an amplifier. This approach was later improved upon using vacuum tube technology in the first half of the 20th century.

The first wearable hearing aid was invented in 1938. While its earpiece, wire and receiver were portable, the battery pack that powered them had to be strapped to the user’s leg. It was not until the 1950s that engineer Norman Krim developed a small, one-piece hearing aid.

The invention of the microprocessor led to the miniaturisation of self-contained hearing aids, effectively turning them into tiny computers. Today’s hearing aids are so unobtrusive as to be almost unnoticeable to an observer, and future devices may be implanted in the ear canal and powered by the human body.

AEDs

The brief history of defibrillation recently shared by Dr Melinda Stanners demonstrates a similar trajectory from hospital application to personal device.

The effectiveness of electric shocks in reversing ventricular fibrillation was first demonstrated in 1899. It took more than a century for this method to evolve to the point where, in 2004, the first automated external defibrillator (AED) was made available to the public without a prescription.

Our own redesign of AED technology represents the first significant innovation in this space for decades. In developing a device that is smaller, lighter, easier to use, smarter and more affordable, we have established CellAED as the world’s first personal AED.

The opportunity in evolving defibrillators from clinical equipment into personal devices is to improve the low survival rates associated with out-of-hospital cardiac arrest.

The key to achieving this is making AEDs smarter, easier to apply under pressure and inexpensive enough for the average household to afford.

DISCLAIMER: CellAED is currently approved for use only in the member states of the European Union (EU). If you would like to be kept informed of when regulatory approval has been secured for your location, register your interest here.